Sui Objects Tutorial: Managing Parallel Transactions with the Object-Centric Model

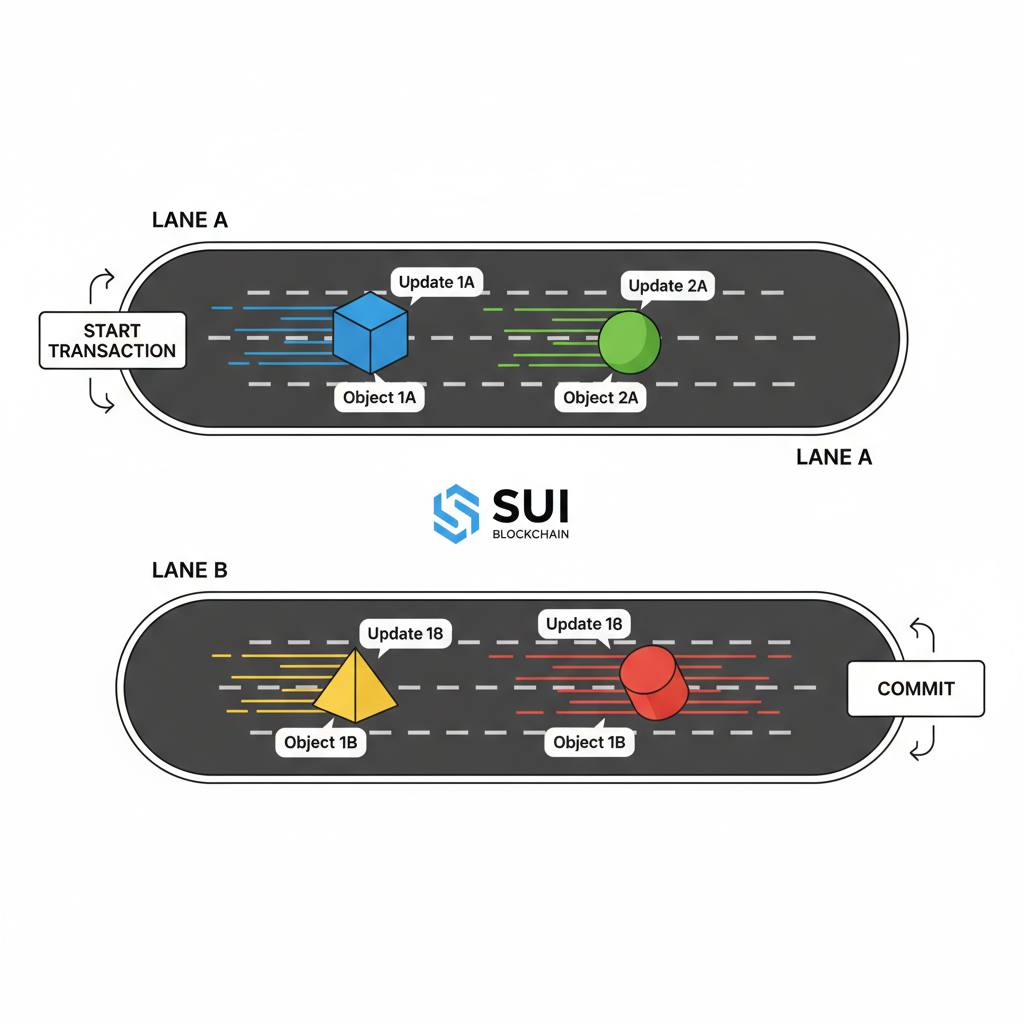

In the congested highways of traditional blockchains, transactions crawl single-file, throttled by global sequencing and account contention. Sui flips this script with its object-centric design, where every asset, from coins to NFTs, exists as a standalone object with a unique ID, owner, and type. This object model unlocks parallel execution: transactions touching disjoint objects race ahead independently, slashing latency and boosting throughput. Developers wielding the Move language craft apps that scale smoothly, sidestepping the bottlenecks that plague Ethereum or Solana during peak demand. Picture real-time trading bots processing orders in parallel; that’s the edge Sui delivers.

Decoding Sui Objects: Independence at the Core

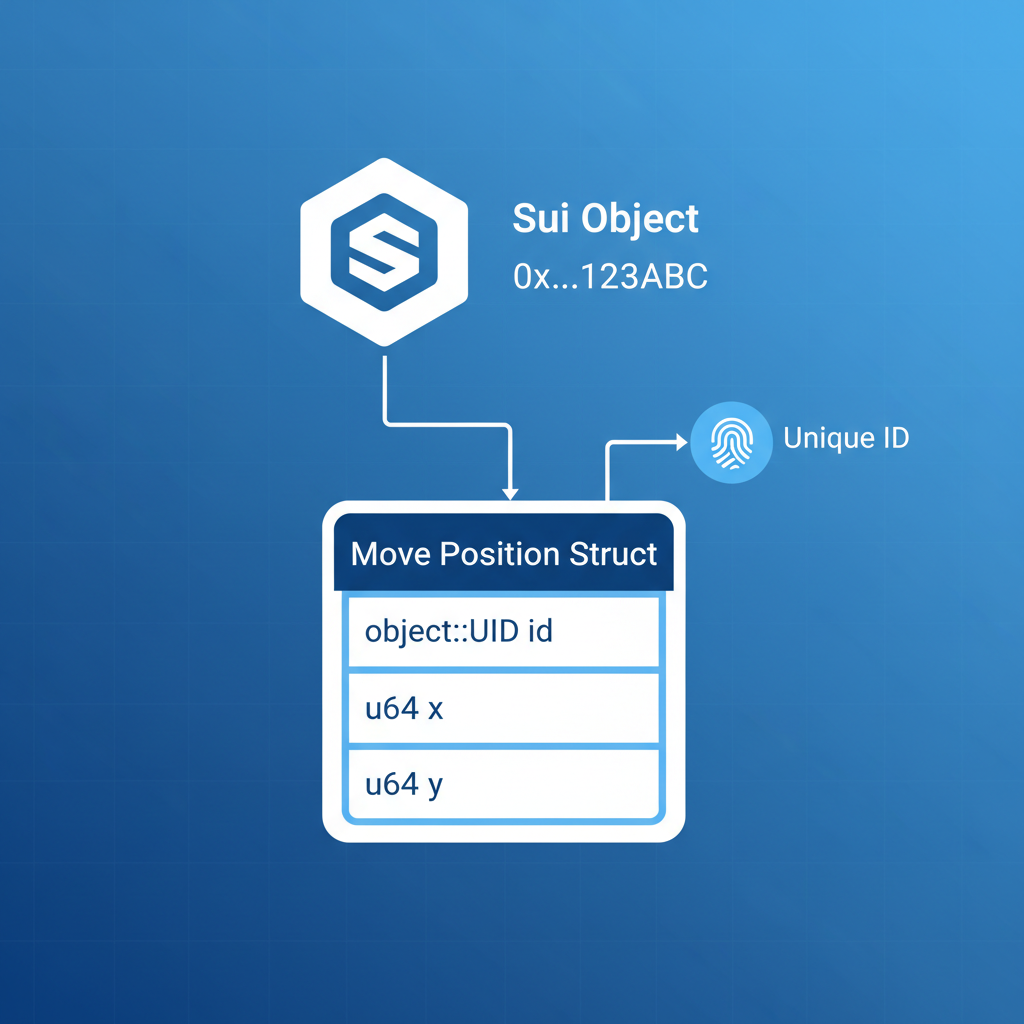

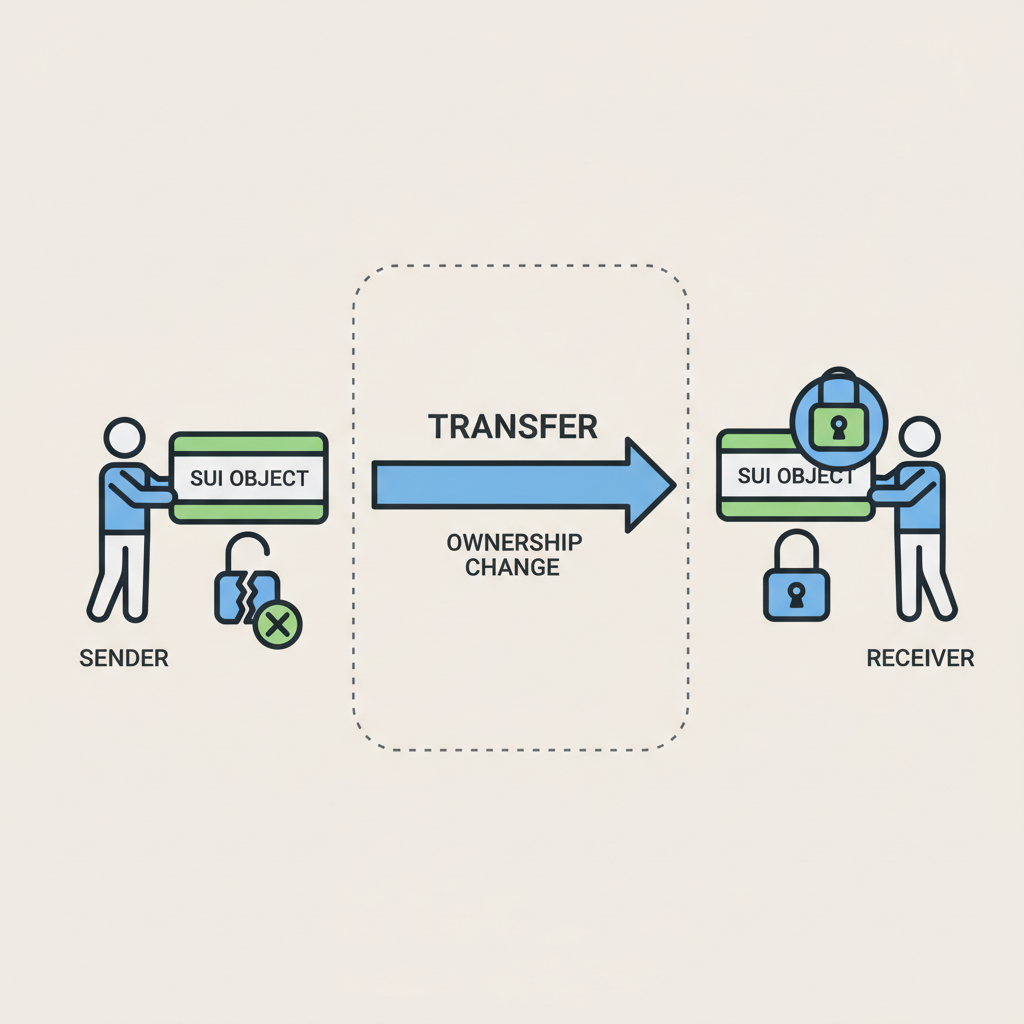

Sui treats on-chain data not as mutable accounts but as autonomous objects. Each object carries a unique ID for tracking, an owner field that enforces access control, and a type, defined in a Move module, that determines its behavior. Coins? Objects. NFTs? Objects. Even smart contract state splinters into objects. This granularity shines in Move: object types cannot have the `copy` or `drop` abilities, so you can’t accidentally duplicate or silently discard an object; that linear logic is baked into the language. Ownership rules prevent races: an owned object can be mutated only by a transaction its owner signs, and any other access requires the object to be explicitly shared.
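The anatomy above can be mimicked in a few lines of Python. This is a toy sketch, not Sui code: the `SuiObject` class, the `SHARED` marker, and the `mutate` and `transfer` helpers are hypothetical, and they model only the runtime ownership checks. Move’s guarantees against copying or discarding objects come from its type system at compile time, which Python cannot reproduce.

```python
import uuid
from dataclasses import dataclass, field

# Hypothetical toy model for illustration; not part of any Sui SDK.
SHARED = "<shared>"  # marker owner value for a shared object


@dataclass
class SuiObject:
    type_name: str                      # e.g. "Coin<SUI>" or "Position"
    owner: str                          # owning address, or SHARED
    fields: dict = field(default_factory=dict)
    object_id: str = field(default_factory=lambda: "0x" + uuid.uuid4().hex)
    version: int = 0                    # bumped on every successful change


def mutate(obj: SuiObject, signer: str, key: str, value) -> None:
    """Owned objects change only in transactions their owner signs."""
    if obj.owner != SHARED and obj.owner != signer:
        raise PermissionError(f"{signer} does not own {obj.object_id}")
    obj.fields[key] = value
    obj.version += 1


def transfer(obj: SuiObject, signer: str, recipient: str) -> None:
    """Ownership moves to the recipient; no copy of the object is made.

    A shared object has no owning address, so this check rejects it too.
    """
    if obj.owner != signer:
        raise PermissionError(f"{signer} cannot transfer {obj.object_id}")
    obj.owner = recipient
    obj.version += 1


coin = SuiObject("Coin<SUI>", owner="0xalice", fields={"balance": 100})
mutate(coin, "0xalice", "balance", 90)  # allowed: Alice owns the coin
transfer(coin, "0xalice", "0xbob")      # the coin now belongs to Bob
try:
    mutate(coin, "0xalice", "balance", 0)  # Alice no longer owns it
except PermissionError as err:
    print("rejected:", err)
```

The version counter stands in for the idea that every change produces a new object version; the real bookkeeping is done by the Sui runtime.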

Contrast this with account models, where global sequencing means that updating Alice’s balance holds up Bob’s entirely unrelated NFT mint. Sui’s design lets both proceed in parallel. Sui’s documentation and blogs such as Suipiens underline the payoff: parallel processing cuts latency. Simple transactions that touch only owned objects take a fast path that skips consensus, while complex ones that touch shared objects go through consensus ordering without holding up unrelated transactions.
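The two execution paths can be summarized as a routing rule over a transaction’s inputs. The sketch below is a simplification under stated assumptions: `Ownership`, `InputObject`, and `execution_path` are hypothetical names, and Sui’s real protocol involves far more machinery than this single check.

```python
from dataclasses import dataclass
from enum import Enum

# Hypothetical, simplified routing rule for illustration; not Sui's code.


class Ownership(Enum):
    ADDRESS_OWNED = "address-owned"
    IMMUTABLE = "immutable"
    SHARED = "shared"


@dataclass(frozen=True)
class InputObject:
    object_id: str
    ownership: Ownership


def execution_path(inputs: list[InputObject]) -> str:
    """Any shared input forces consensus ordering; otherwise take the fast path."""
    if any(obj.ownership is Ownership.SHARED for obj in inputs):
        return "consensus"
    return "fast path"


transfer_tx = [InputObject("0xa1", Ownership.ADDRESS_OWNED)]
mint_tx = [
    InputObject("0xb1", Ownership.ADDRESS_OWNED),
    InputObject("0xconfig", Ownership.IMMUTABLE),  # frozen, read-only object
]
swap_tx = [
    InputObject("0xa2", Ownership.ADDRESS_OWNED),
    InputObject("0xpool", Ownership.SHARED),       # every trader hits this pool
]

for name, tx in [("transfer", transfer_tx), ("mint", mint_tx), ("swap", swap_tx)]:
    print(f"{name}: {execution_path(tx)}")
```

A single shared input is enough to move a transaction onto the consensus path, which is why the design advice that follows favors owned objects.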

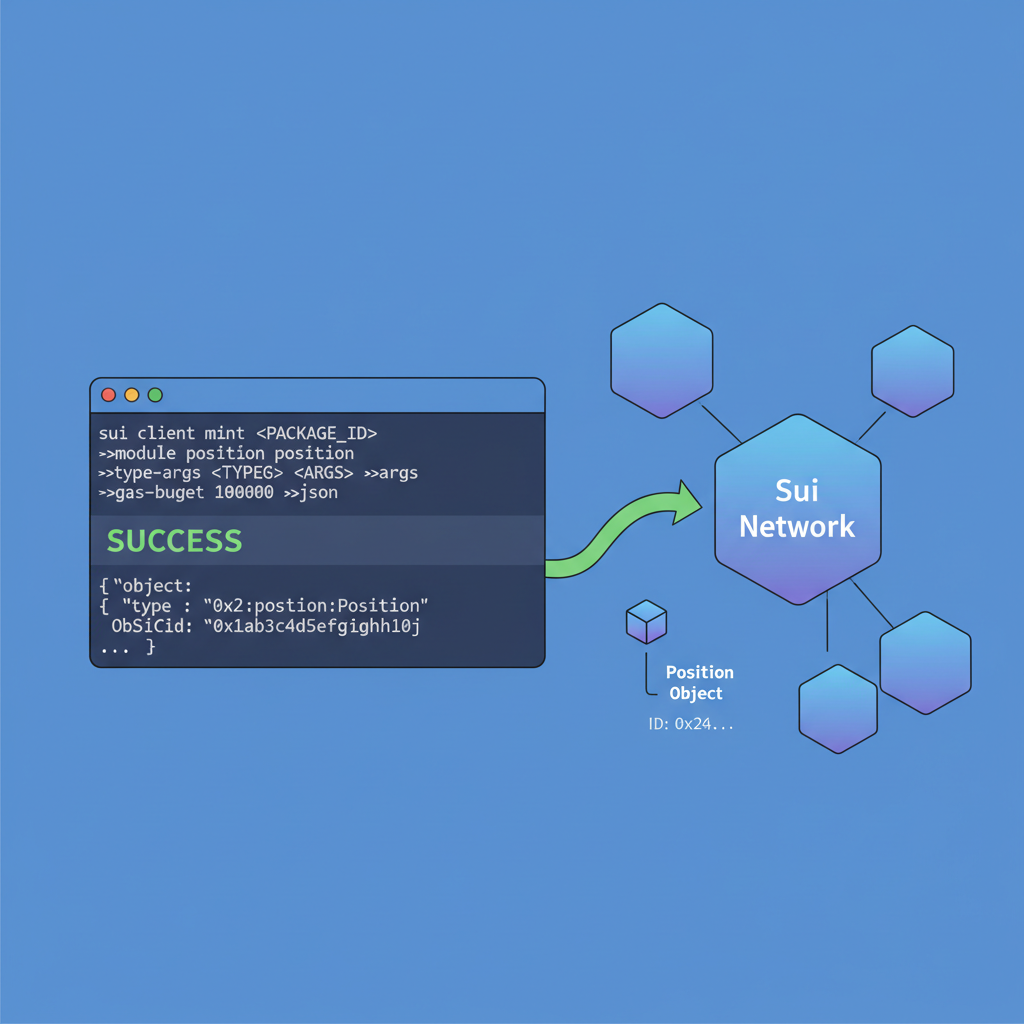

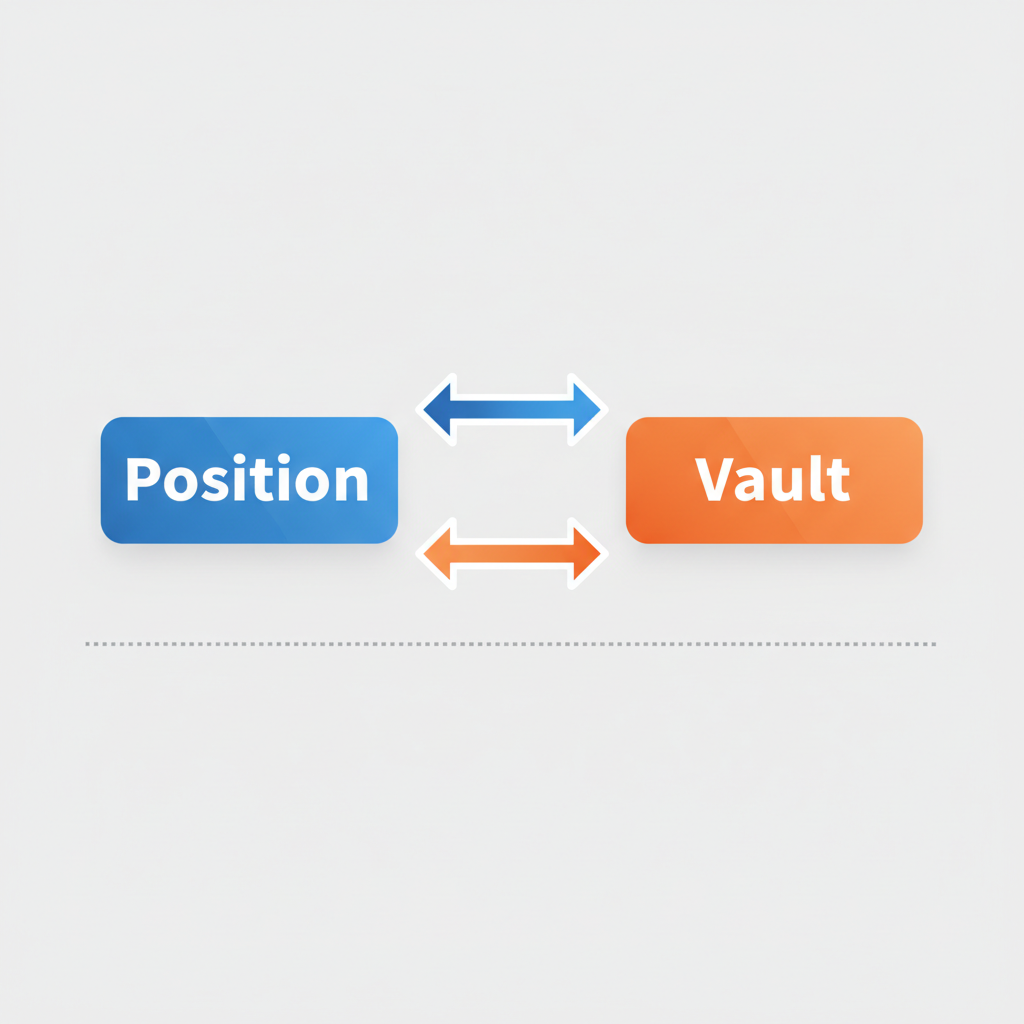

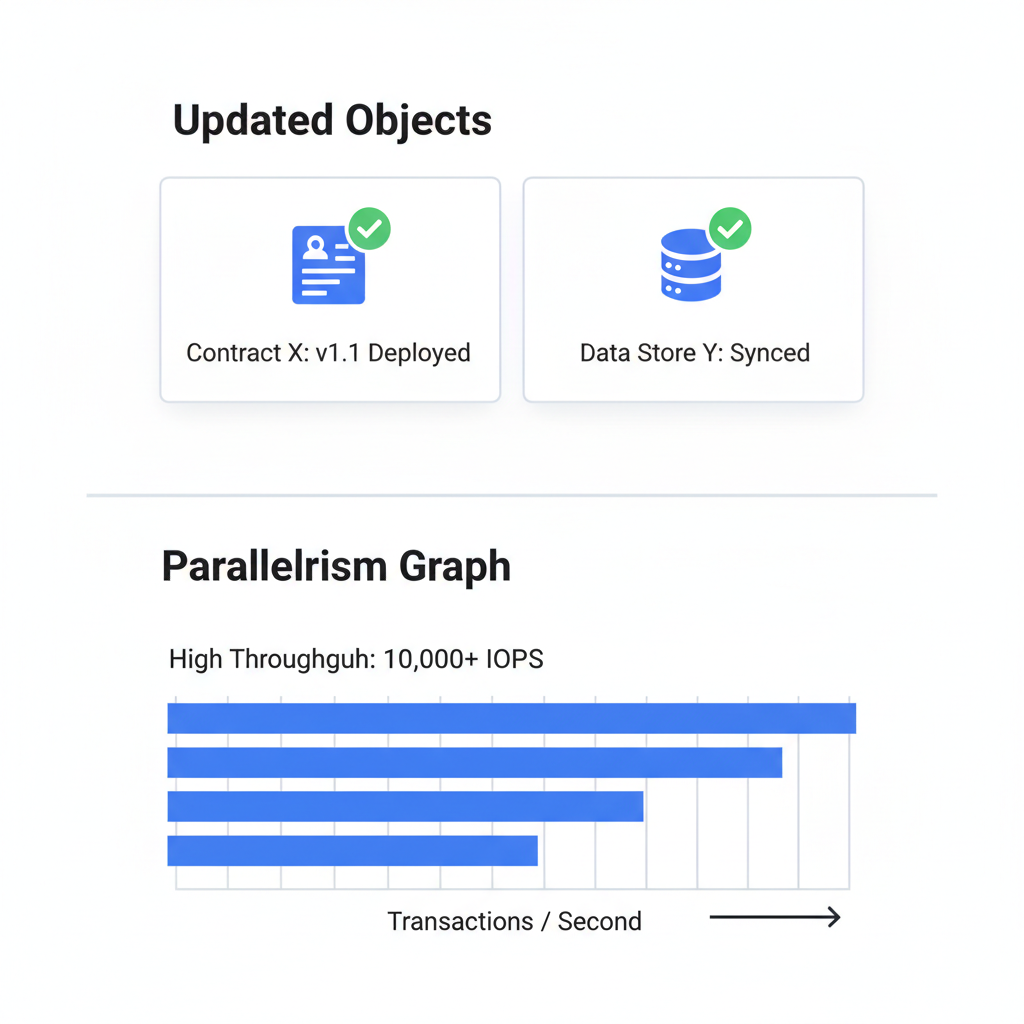

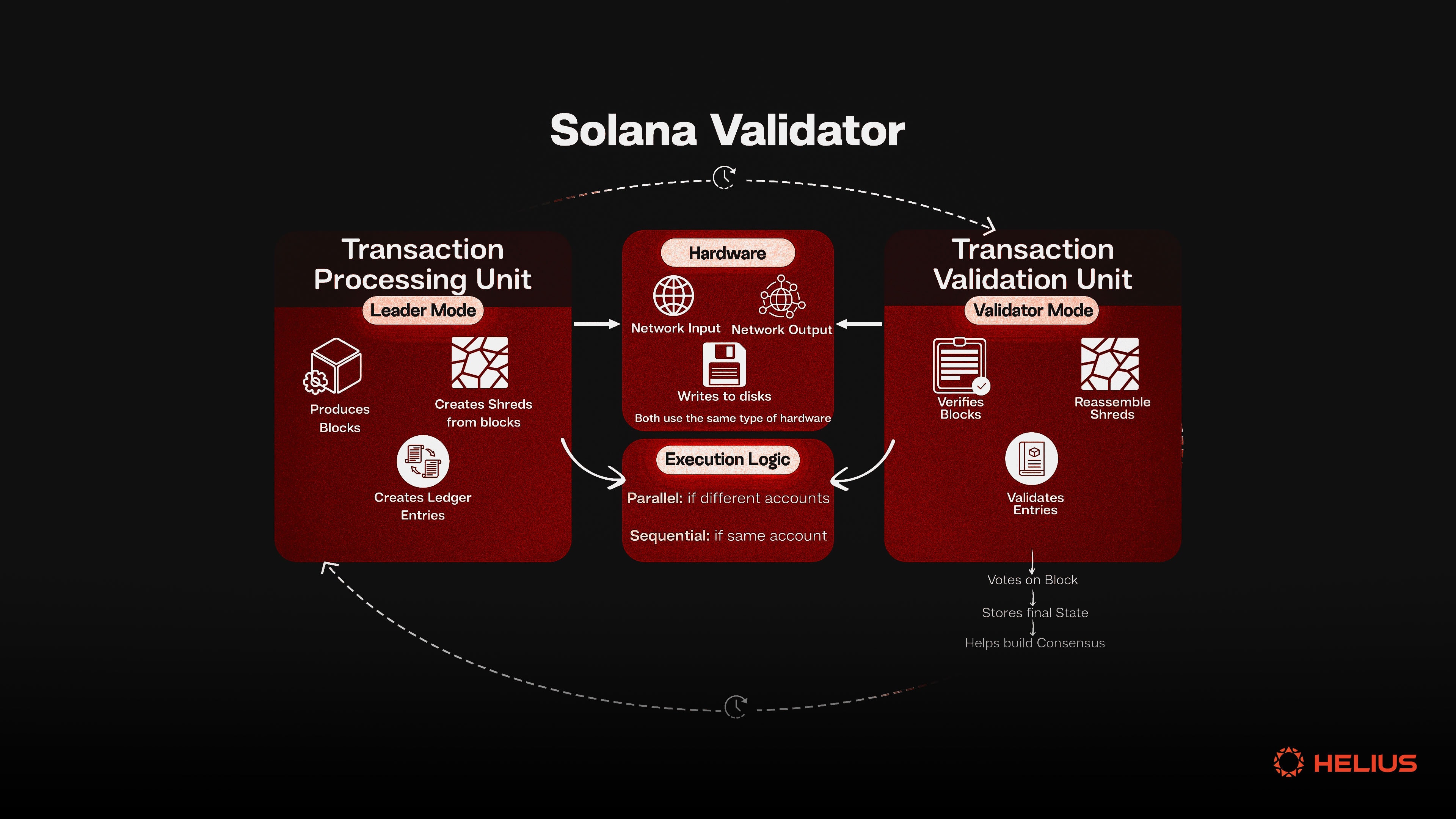

Here’s where Sui’s genius shines: parallel transactions. Transactions declare the objects they read and write upfront. If two transactions’ sets don’t overlap, validators execute them concurrently, with no global lockstep. This hallmark of Sui’s object-centric design delivers sub-second finality for most operations. Take a DEX: User A swaps on Pool X while User B adds liquidity to Pool Y. Neither touches the other’s objects, so both confirm almost instantly. Sui keeps this safe: transactions that touch the same shared object (like a pool) are ordered by consensus and execute one after another, while transactions on owned objects (user wallets) parallelize freely. Blogs from Sui and Level Up Coding spotlight this dual-path processing: a fast path for transactions that touch only owned objects and a consensus path for those that touch shared objects. In practice, this parallelism gives trading bots a high-frequency edge; my charts show momentum patterns resolving faster on Sui than on competing chains, unmarred by mempool wars.

To harness this, design apps around per-user objects. Ditch global state and create a Position object for each trader, holding their vault, orders, and history. Transfers then touch only that trader’s objects and execute in parallel across users. Move’s syntax is terse: give a struct the `key` ability (with `id: UID` as its first field) to make it a top-level object, and add `store` if it should also be storable inside other objects or transferable outside its defining module.

Consider a lending protocol. The traditional approach keeps a single contract state that is updated sequentially. The Sui way models each loan as an object linking the borrower’s and lender’s positions, so repayments on different loans parallelize naturally. The pitfall to dodge is overusing shared objects, which serialize. Analyses from MevX and Gate.io report that designs heavy on owned objects hit 100k+ TPS in tests, dwarfing account-based chains.

Start simple: mint user objects on signup, each encapsulating that user’s state. Transactions then specify object IDs precisely, signaling Sui’s engine to run them with maximal concurrency. This isn’t hype; it’s engineered predictability for dApps that demand speed, and real-world benchmarks bear it out.
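To make the disjoint-set rule concrete, here is a hypothetical toy scheduler that groups transactions into batches whose read/write sets do not collide, using the DEX example above plus an extra swap and a read that contend with it. It illustrates the rule only; Sui validators are not implemented this way, and `Tx`, `conflicts`, and `schedule` are invented names.

```python
from dataclasses import dataclass

# Hypothetical toy scheduler illustrating the disjoint-object rule;
# Sui validators do not literally build batches this way.


@dataclass(frozen=True)
class Tx:
    name: str
    reads: frozenset[str]
    writes: frozenset[str]


def tx(name: str, reads=(), writes=()) -> Tx:
    return Tx(name, frozenset(reads), frozenset(writes))


def conflicts(a: Tx, b: Tx) -> bool:
    """Conflict if either transaction writes an object the other reads or writes."""
    return bool(a.writes & (b.reads | b.writes)) or bool(b.writes & a.reads)


def schedule(txs: list[Tx]) -> list[list[Tx]]:
    """Pack transactions into batches whose members can all run in parallel.

    Each transaction goes into the batch right after the last batch holding
    anything it conflicts with, so conflicting transactions keep their order.
    """
    batches: list[list[Tx]] = []
    for t in txs:
        last_conflict = -1
        for i, batch in enumerate(batches):
            if any(conflicts(t, other) for other in batch):
                last_conflict = i
        if last_conflict + 1 == len(batches):
            batches.append([])
        batches[last_conflict + 1].append(t)
    return batches


txs = [
    tx("A swaps on Pool X", writes={"coin_a", "pool_x"}),
    tx("B adds liquidity to Pool Y", writes={"coin_b", "pool_y"}),
    tx("C swaps on Pool X", writes={"coin_c", "pool_x"}),
    tx("D reads Pool Y", reads={"pool_y"}, writes={"position_d"}),
]
for i, batch in enumerate(schedule(txs)):
    print(f"batch {i}:", [t.name for t in batch])
```

A and B share a batch because their object sets are disjoint; C waits for A because both write `pool_x`, and D waits for B because it reads the `pool_y` that B writes. In this model, two transactions that only read the same object do not conflict.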
Sui’s testnets have clocked over 120,000 TPS in owned-object workloads, per reports from the Sui Blog and MevX, far outpacing account-based rivals that peak at 1,000 to 5,000 TPS. This isn’t theoretical; it’s the backbone for bots parsing my chart patterns and executing buys on breakouts across disjoint positions without a hitch.

Time to code. In this tutorial, we’ll mint two Position objects, one each for traders Alice and Bob, then swap assets in parallel. Transactions that call Move entry functions pass object IDs explicitly as arguments, telling Sui’s engine exactly which objects each call touches so it can run them concurrently. Assume a basic Position struct with fields for its ID, balance, and orders. First, a Move module defines the object; deploy it with the Sui CLI, then mint a Position for Alice.

This setup scales linearly with users. Each trader’s Position stays owned, so it never waits on shared-object ordering. My trading scripts leverage this: one bot leg scans momentum on SUI perps across 100 positions simultaneously, acting on signals before competitors’ sequential chains grind to a halt.

Avoid the trap of shared-object creep. Pools and oracles must be shared and therefore serialize, so keep them to a minimum: use epoch-based oracle reads and per-user escrows for trades. Gate.com analyses show shared-heavy apps dropping to 10k TPS, while owned designs sustain 100k+. Another pitfall: declare effects accurately, because inaccurate or over-broad read/write sets force a sequential fallback. A pro tip from the charts: monitor object contention in Sui Explorer, since heavy contention on a single object signals that the design needs rework. For bots, batch related operations into one programmable transaction block (PTB) to cut per-transaction overhead. Hexn and Webisoft breakdowns report roughly 10x latency gains over Solana, whose parallel runtime stalls when transactions contend for the same accounts.

Security stays ironclad. Move’s borrow checker rejects mutable aliasing, and because objects lack the `drop` ability, every object must be explicitly transferred, stored, or deleted rather than silently lost. BDS Blog notes this enables composable dApps free of the reentrancy problems that plague the EVM.

Sui’s object-centric paradigm isn’t a gimmick; it’s a scalpel for tomorrow’s Web3.
By atomizing state into independent objects, it liberates transactions to parallelize natively, fueling dApps that hum at internet speed. Whether charting breakouts or settling DeFi trades, this model turns blockchain from bottleneck to booster. Dive in, mint your first object, and feel the momentum unlock.

Crafting Objects for Peak Parallelism in Sui
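The per-trader Position design from this tutorial can be sketched outside Move as well. In this hypothetical Python model, `Position` and `swap` are illustrative names, and each Position carries its own lock rather than sharing a global one, so Alice’s orders never wait on Bob’s. Python’s global interpreter lock means this demonstrates the locking structure, not true CPU parallelism.

```python
import threading
from concurrent.futures import ThreadPoolExecutor

# Hypothetical per-trader object; names are illustrative, not a Sui API.


class Position:
    def __init__(self, owner: str, balance: int):
        self.owner = owner
        self.balance = balance
        self.orders: list[str] = []
        self.lock = threading.Lock()  # one lock per object, never a global one


def swap(position: Position, signer: str, amount: int, order: str) -> None:
    """Debit one trader's Position; touches no other trader's state."""
    if signer != position.owner:
        raise PermissionError("only the owner can use this Position")
    with position.lock:  # contends only with this same trader's orders
        if amount > position.balance:
            raise ValueError("insufficient balance")
        position.balance -= amount
        position.orders.append(order)


alice = Position("0xalice", 1_000)
bob = Position("0xbob", 1_000)
jobs = [(alice, "0xalice", 10, f"alice-{i}") for i in range(50)]
jobs += [(bob, "0xbob", 5, f"bob-{i}") for i in range(50)]

with ThreadPoolExecutor(max_workers=8) as pool:
    # list() forces evaluation so any exception surfaces here
    list(pool.map(lambda job: swap(*job), jobs))

print(alice.balance, bob.balance)  # 500 750
```

Because no lock is shared between traders, adding more traders adds more independent work rather than a longer queue, which is the intuition behind the linear-scaling claim.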

Hands-On: Executing Parallel Transactions

Code Walkthrough

Mint Alice’s Position with `sui client call --package <PACKAGE_ID> --module position --function mint --gas-budget 10000000`, then repeat for Bob. Now fire the parallel transactions: Alice transfers to one pool object, Bob to another. Because the two transactions share no input objects, validators execute them in parallel. Logs confirm sub-second confirmation, with latencies under 400 ms even under load, matching Suipiens data.

Pitfalls and Pro Tips for Sui Parallelism
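One way to act on the contention advice is to count how often each object appears among a set of transactions’ inputs before deploying. The `contention_report` helper below is a hypothetical offline check, assuming you already have each transaction’s input object IDs; it is not a Sui Explorer or SDK feature. The first scenario mirrors Alice and Bob transferring to separate pools, while the second routes every trader through one shared vault.

```python
from collections import Counter

# Hypothetical offline check over each transaction's input object IDs;
# not a Sui Explorer or SDK feature.


def contention_report(tx_inputs: dict[str, set[str]], threshold: int = 2) -> dict[str, int]:
    """Return objects touched by at least `threshold` transactions, hottest first."""
    counts = Counter(obj for inputs in tx_inputs.values() for obj in inputs)
    return {obj: n for obj, n in counts.most_common() if n >= threshold}


per_pool = {
    "alice_transfer": {"alice_position", "pool_1"},
    "bob_transfer": {"bob_position", "pool_2"},
}
global_vault = {
    "alice_transfer": {"alice_position", "global_vault"},
    "bob_transfer": {"bob_position", "global_vault"},
    "carol_transfer": {"carol_position", "global_vault"},
}

print(contention_report(per_pool))      # {}
print(contention_report(global_vault))  # {'global_vault': 3}
```

An empty report means no object is a bottleneck; a single object touched by every transaction, like `global_vault` here, is the shared-object creep the tips above warn against.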